TLDR: How can we represent edits in neural networks?

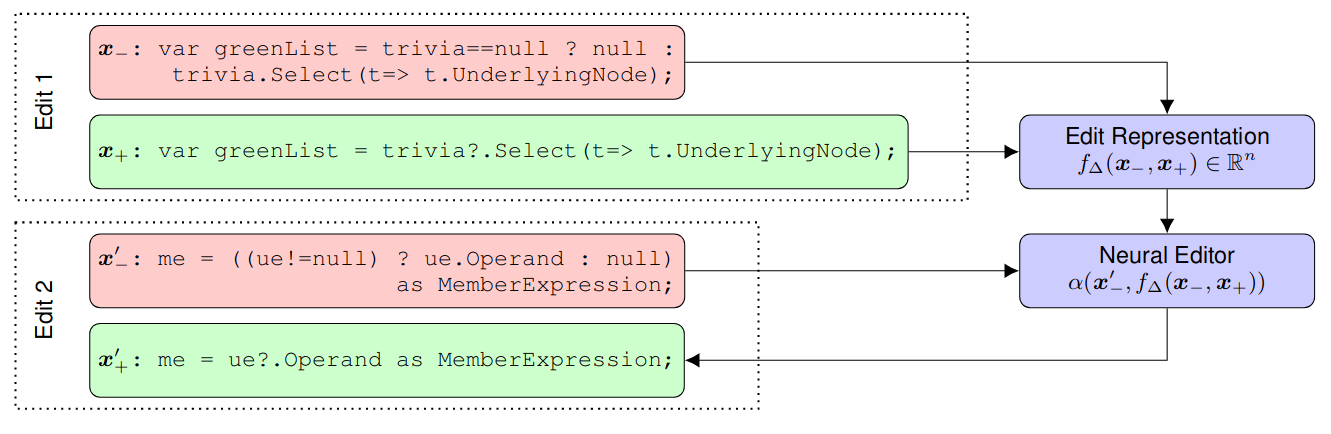

We introduce the problem of learning distributed representations of edits. By combining a “neural editor” with an “edit encoder”, our models learn to represent the salient information of an edit and can be used to apply edits to new inputs. We experiment on natural language and source code edit data. Our evaluation yields promising results, suggesting that our neural network models learn to capture the structure and semantics of edits. We hope that this task and data source will inspire other researchers to work further on this problem.
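
To make the encoder/editor split concrete, here is a minimal sketch of the two interfaces. It is not the learned model described above: the edit representation is a hand-written bag of token-count changes rather than a distributed vector produced by a neural network, and the names `EditVector`, `encode_edit`, and `apply_edit` are hypothetical. It only illustrates the idea of extracting an edit from one before/after pair and applying it to a different input.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical stand-in for a learned edit representation:
# token -> net change in count (negative = removed, positive = added).
EditVector = Dict[str, int]


def encode_edit(before: List[str], after: List[str]) -> EditVector:
    """Toy 'edit encoder': summarize what changed between two token lists."""
    delta = Counter(after)
    delta.subtract(Counter(before))
    # Keep only tokens whose count actually changed.
    return {tok: n for tok, n in delta.items() if n != 0}


def apply_edit(tokens: List[str], edit: EditVector) -> List[str]:
    """Toy 'editor': remove the edit's removed tokens from a new input and
    insert its added tokens at the position of the first removal."""
    pending_removals = {tok: -n for tok, n in edit.items() if n < 0}
    additions = [tok for tok, n in edit.items() if n > 0 for _ in range(n)]
    out: List[str] = []
    inserted = False
    for tok in tokens:
        if pending_removals.get(tok, 0) > 0:
            pending_removals[tok] -= 1  # consume one pending removal
            if not inserted:
                out.extend(additions)
                inserted = True
        else:
            out.append(tok)
    if not inserted:
        # Nothing was removed, so fall back to appending the additions.
        out.extend(additions)
    return out


# Encode an edit from one example, then apply it to a different input.
edit = encode_edit(["x", "=", "foo", "(", ")"], ["x", "=", "bar", "(", ")"])
print(apply_edit(["y", "=", "foo", "(", "1", ")"], edit))
# prints ['y', '=', 'bar', '(', '1', ')']
```

A bag of token counts discards position and context, which is exactly the kind of information a learned representation would need to capture; the sketch is meant only to show the shape of the two components.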